Stop Hiring a Storyteller to Build a Bridge: The Critical Difference Between LLMs and Specialized AI

If you walked into a construction site and saw the foreman handing the blueprints to a poet, you would run. You wouldn’t wait to see if the building stood up. You wouldn’t ask to see the poet’s previous rhymes. You would know, instinctively, that you were witnessing a disaster in the making.

A poet can describe a bridge beautifully. They can make you feel the sway of the wind against the cables. They can write a moving speech for the opening ceremony. But if you ask them to calculate the load-bearing capacity of a steel beam, people are going to get hurt.

Right now, in boardrooms and IT departments across the globe, smart people are doing exactly this. They are hiring the poet to do the engineer’s job.

We are seeing a massive conflation of terms. To the average executive, "AI" is a monolithic magic button. It is all just "the computer thinking." But as security professionals and IT leaders, we have to be better than that. We have to understand the tool we are holding before we try to fix the engine with it.

The biggest mistake companies are making right now is treating all AI the same. It isn't.

If you are paying for a Large Language Model (LLM) like ChatGPT when you actually need the finished, calculated product of a Specialized AI, you are wasting budget and introducing massive, uncalculated risk into your environment.

This distinction keeps me up at night. Because when you ask a chatbot to monitor a live network for threats, you aren't getting an analysis. You are getting a story. And in cybersecurity, stories don't stop hackers. Determinism does.

The Two Buckets: The Guesser vs. The Doer

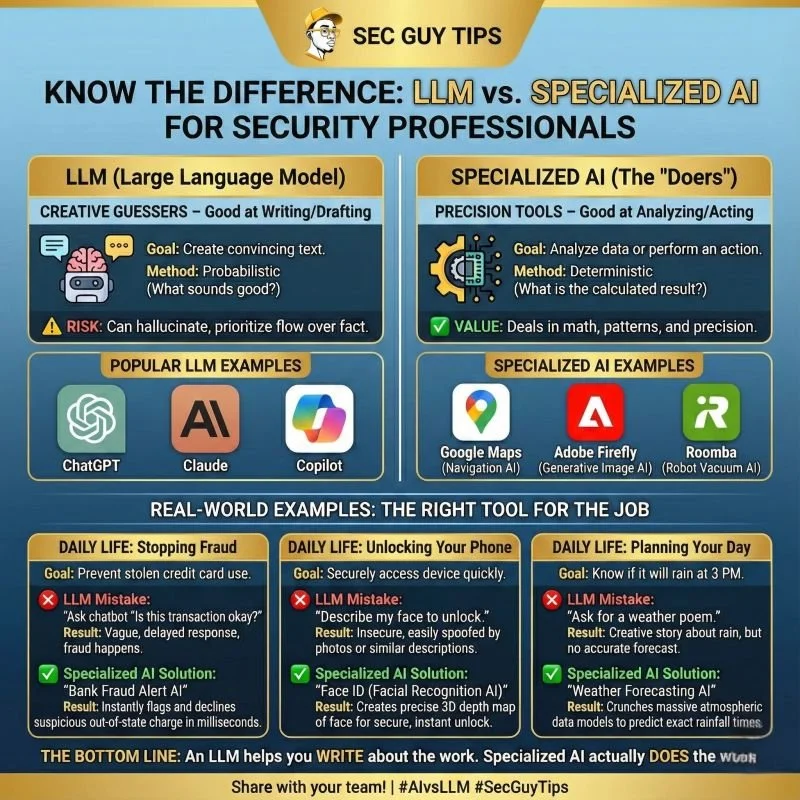

To understand why this matters, we have to strip away the marketing fluff and look at the architecture. We need to categorize our tools correctly. In my view, and in the "Sec Guy" framework, AI falls into two distinct buckets: The Creative Guessers (LLMs) and The Precision Doers (Specialized AI).

The Creative Guesser (LLM)

Large Language Models, the technology behind ChatGPT, Claude, and Gemini, are probabilistic engines.

That is a fancy way of saying they are professional gamblers. They don't "know" facts in the way a database does. They don't "calculate" answers in the way a calculator does. They predict the next most likely token (word or part of a word) in a sequence based on the massive amount of text they were trained on.

Their Goal: To sound convincing.

Their Method: Probabilistic (What sounds good? What usually comes next?)

The Risk: They prioritize flow over fact.

Think of an LLM as the smooth talker at a party. They know a little bit about everything. If you ask them about quantum physics, they can string together sentences that sound like quantum physics. They can mimic the cadence of a professor. But they aren't doing the math; they are doing an impression of someone doing the math.
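To make "predict the next most likely token" concrete, here is a minimal sketch of the sampling step. The scores in `toy_logits` are invented for illustration, and `softmax` and `sample_next_token` are hypothetical helper names, not any vendor's API. A real model scores tens of thousands of tokens with a neural network, but the last step looks broadly like this: turn scores into probabilities, then roll the dice.

```python
import math
import random

def softmax(logits):
    """Convert raw scores into probabilities that sum to 1."""
    peak = max(logits.values())
    exps = {tok: math.exp(score - peak) for tok, score in logits.items()}
    total = sum(exps.values())
    return {tok: value / total for tok, value in exps.items()}

def sample_next_token(logits, rng):
    """Draw one token at random, weighted by its probability."""
    probs = softmax(logits)
    tokens = list(probs)
    weights = [probs[tok] for tok in tokens]
    return rng.choices(tokens, weights=weights, k=1)[0]

# Hypothetical scores a model might assign after the prompt "2 + 2 =".
toy_logits = {"4": 3.0, "5": 1.0, "four": 0.5, "22": 0.2}

rng = random.Random(0)  # fixed seed so the demo is repeatable
draws = [sample_next_token(toy_logits, rng) for _ in range(1000)]
counts = {tok: draws.count(tok) for tok in toy_logits}

print(softmax(toy_logits))
print(counts)  # "4" is the most common draw, but it is not the only one
```

Nothing in that loop adds two and two. The right answer wins most of the time only because it carries the highest score.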

The Precision Doer (Specialized AI)

Specialized AI, on the other hand, is built for a specific, narrow purpose. These models are often deterministic or highly constrained. They aren't trying to write a poem about traffic; they are crunching millions of data points to find the fastest route from point A to point B.

Their Goal: To analyze data or perform an action.

Their Method: Deterministic (What is the calculated result?)

The Value: They deal in math, patterns, and precision.

These are the silent workhorses. They are the "engineers" of the AI world. When you unlock your phone with your face, you aren't asking it to write a description of your nose. You are using a specialized computer vision model that projects more than 30,000 infrared dots onto your face to build a mathematical depth map. The outcome is a binary pass/fail. It works, or it doesn't.

The "Swiss Army Knife" Fallacy

The problem arises when we try to use the Guesser to do the Doer's work.

I see this constantly in the security space. A CISO reads a headline about AI and tells their team, "We need to use GenAI to automate our SOC."

So, a junior analyst fires up a prompt: "Analyze these 5,000 log lines and tell me if we are under attack."

The LLM looks at the logs. It sees patterns that look like attacks it read about in its training data. It confidently replies: "Yes, IP 192.168.1.5 is performing a SQL injection attack."

The analyst panics. They block the IP. The CFO calls screaming because the payroll server just went offline.

What happened? The LLM hallucinated. It saw a string of text that resembled a SQL query and, trying to be helpful and "complete the pattern," it labeled it an attack. It didn't actually parse the syntax or check the server response codes. It just told a convincing story about a hack.

A Specialized AI (like a modern SIEM or EDR heuristic engine) would handle this differently. It wouldn't "read" the logs like a novel. It would mathematically analyze the deviation from baseline traffic. It would flag the behavior based on rigid rule sets or anomaly detection algorithms that have been fine-tuned on millions of actual attacks, not just text about attacks.
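Here is a rough sketch of what "deviation from baseline" can mean in practice, using a plain z-score. The function name `is_anomalous`, the sample numbers, and the threshold of 3 standard deviations are all assumptions for illustration. Production SIEM and EDR engines use far richer models, but the principle is the same: the same input always produces the same verdict.

```python
from statistics import mean, stdev

def is_anomalous(baseline, observed, threshold=3.0):
    """Flag `observed` if it sits more than `threshold` standard
    deviations away from the mean of the baseline samples."""
    mu = mean(baseline)
    sigma = stdev(baseline)
    if sigma == 0:
        # A perfectly flat baseline: any change at all is a deviation.
        return observed != mu
    z_score = abs(observed - mu) / sigma
    return z_score > threshold

# Hypothetical requests-per-minute from one host during a normal week.
baseline_rpm = [42, 38, 45, 40, 41, 39, 44, 43, 40, 42]

print(is_anomalous(baseline_rpm, 44))   # False: within normal variation
print(is_anomalous(baseline_rpm, 400))  # True: far outside the baseline
```

No narrative, no guess about intent. Just a number compared against a threshold you chose and can audit.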

Case Studies: Daily Life vs. Enterprise Risk

To really drive this home, let’s look at the examples from the infographic I shared recently. These are tools we use every day, and they perfectly illustrate why the "right tool for the job" matters.

1. Stopping Fraud (The Stakes are High)

The Daily Life Scenario: You are in a different state, buying gas. You swipe your card. The Goal: Prevent stolen credit card use while allowing legitimate transactions.

The LLM Approach (The Failure): Imagine if Visa used a chatbot for this. You swipe your card. The system asks the bot: "Is this transaction okay?" The bot thinks: "Well, usually Shun buys gas in Washington. But people travel. Travel is fun. Gas stations have snacks. This seems like a narrative arc where the protagonist goes on a road trip. Yes, authorize it." Result: Vague logic. Slow response. Fraud happens because the bot prioritized a plausible story over hard data.

The Specialized AI Approach (The Solution): Visa uses "Bank Fraud Alert AI." This is a specialized anomaly detection model. It crunches the geolocation, the time of day, the amount, the purchase velocity, and your device ID in milliseconds. It compares this against a rigid historical profile of your spending. Result: It instantly flags the out-of-state charge because the math didn't add up. It declines the card before the pump even activates.
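As a toy version of that kind of check, the sketch below scores a transaction from distance, travel speed, and amount. Everything here is an assumption for illustration: the `Transaction` fields, the weights, and the cutoffs are made up, and this is not how any card network actually scores fraud. Real systems learn their thresholds from data rather than hard-coding them.

```python
import math
from dataclasses import dataclass

@dataclass
class Transaction:
    amount: float
    lat: float
    lon: float
    minutes_since_last: float

def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance between two points, in kilometres."""
    radius_km = 6371.0  # mean Earth radius
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)
    a = (math.sin(dphi / 2) ** 2
         + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2)
    return 2 * radius_km * math.asin(math.sqrt(a))

def risk_score(tx, last_lat, last_lon, typical_amount):
    """Add up fixed-weight risk signals (illustrative weights only)."""
    score = 0
    km = haversine_km(last_lat, last_lon, tx.lat, tx.lon)
    hours = max(tx.minutes_since_last / 60, 1 / 60)
    if km / hours > 900:   # faster than an airliner: "impossible travel"
        score += 60
    if km > 500:           # far from the previous purchase
        score += 20
    if tx.amount > 5 * typical_amount:
        score += 30
    return score

seattle = (47.61, -122.33)  # location of the previous purchase
road_trip = Transaction(amount=45.0, lat=45.52, lon=-122.68, minutes_since_last=240)
suspicious = Transaction(amount=900.0, lat=25.76, lon=-80.19, minutes_since_last=30)

print(risk_score(road_trip, *seattle, typical_amount=50.0))   # 0
print(risk_score(suspicious, *seattle, typical_amount=50.0))  # 110
```

A four-hour drive to the next state scores zero. A large purchase on the other side of the country thirty minutes later trips every signal.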

The Enterprise Translation: In your business, this is your Identity Access Management (IAM). You do not want an LLM deciding who gets into your servers based on a "vibes check" of their login request. You want a specialized Conditional Access policy that mathematically verifies device health, IP reputation, and MFA tokens.
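In code, a policy like that is nothing more exotic than a handful of explicit checks. This is a minimal sketch, not any identity provider's real API: `LoginRequest`, `evaluate_access`, and the 0 to 100 reputation scale are hypothetical names and values chosen for the example.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class LoginRequest:
    device_compliant: bool
    ip_reputation: int   # 0 (known bad) to 100 (clean); hypothetical scale
    mfa_passed: bool

def evaluate_access(req, min_ip_reputation=70):
    """Return 'allow' or 'deny' from explicit checks, never free-text judgment."""
    if not req.device_compliant:
        return "deny"
    if req.ip_reputation < min_ip_reputation:
        return "deny"
    if not req.mfa_passed:
        return "deny"
    return "allow"

print(evaluate_access(LoginRequest(True, 95, True)))   # allow
print(evaluate_access(LoginRequest(True, 95, False)))  # deny
```

Every denial traces back to one specific line, which is exactly what you want when the auditor asks why someone was locked out.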

2. Unlocking Your Tech (Security vs. Convenience)

The Daily Life Scenario: You pick up your iPhone. The Goal: Securely access the device instantly.

The LLM Approach (The Failure): You hold the phone up. The LLM tries to describe you. "I see a man. He looks tired. He has a beard similar to the owner. He is wearing a hat. This fits the description of the user." Result: Insecure. Someone holding a photo of you, or even a person who looks vaguely like you, could spoof it. The description is too broad.

The Specialized AI Approach (The Solution): Apple uses Face ID. This is a dedicated neural engine processing 3D depth data. It doesn't care if you look "tired." It cares whether the depth map of your face matches the encrypted mathematical representation stored in the Secure Enclave, within a tight tolerance. Result: Precise. Deterministic. Secure.
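The general shape of that kind of biometric match can be sketched as a distance check against a stored template. To be clear about the assumptions: this is not Apple's algorithm, the four-number vectors are invented, and `matches_template` and the `0.6` cutoff are hypothetical. Real systems compare much larger representations inside secure hardware.

```python
import math

def matches_template(enrolled, probe, max_distance=0.6):
    """Accept only if the probe vector is within `max_distance` of the template."""
    if len(enrolled) != len(probe):
        raise ValueError("vectors must have the same length")
    return math.dist(enrolled, probe) <= max_distance

# Invented feature vectors standing in for face measurements.
enrolled = [0.12, 0.80, 0.33, 0.54]
same_person = [0.10, 0.82, 0.30, 0.55]
lookalike = [0.60, 0.20, 0.90, 0.10]

print(matches_template(enrolled, same_person))  # True
print(matches_template(enrolled, lookalike))    # False
```

The output is a yes or a no. There is no room for "he has a beard similar to the owner."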

The Enterprise Translation: This is your Biometric Authentication and Physical Security. We cannot rely on "generative" evaluations for access control. Access must be binary. You are either you, or you are not. There is no "close enough" in security.

3. Predicting the Future (Planning vs. Dreaming)

The Daily Life Scenario: You have a family BBQ planned for Saturday. The Goal: Know if it will rain.

The LLM Approach (The Failure): You ask a chatbot, "Will it rain on Saturday?" The bot falls back on its training data (which was cut off months ago) or hallucinates based on the concept of "Spring." It might say, "April showers bring May flowers, so it is likely to be wet!" Result: A nice creative story about rain, but zero meteorological accuracy.

The Specialized AI Approach (The Solution): Weather Forecasting AI. These systems ingest terabytes of atmospheric pressure readings, wind vectors, and satellite imagery. They run massive simulations on supercomputers. Result: It predicts rainfall start times within a 15-minute window.

The Enterprise Translation: This is your Threat Intelligence. You don't want a report that says, "Hackers are often bad in Q4." You want predictive analytics that say, "Based on current dark web chatter and CVE exploit velocity, there is a 94% probability your unpatched Exchange server will be hit in the next 48 hours."

The Hidden Cost of the "Storyteller"

So, why does this distinction matter to your budget? Why is this a "Sec Guy" topic and not just a tech philosophy debate?

Because hallucinations are expensive.

When you hire a "Storyteller" (LLM) to do an "Engineer's" job, you are introducing a layer of clean-up costs that you haven't budgeted for.

The Cost of Verification: If you use an LLM to generate code, your senior developers (who cost $150/hour) now have to spend hours reviewing that code line-by-line to ensure the bot didn't invent a library that doesn't exist. You haven't saved time; you've just shifted the workload from "writing" to "debugging."
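Part of that review can be handed to a deterministic check rather than a tired senior developer. The sketch below parses a piece of generated Python and lists any imported top-level modules that are not installed in the current environment. The function name `missing_imports` and the sample snippet are hypothetical. It only proves a module is importable here; it says nothing about whether the package is trustworthy.

```python
import ast
import importlib.util

def missing_imports(source):
    """Return top-level module names imported by `source` that are not installed."""
    tree = ast.parse(source)
    names = set()
    for node in ast.walk(tree):
        if isinstance(node, ast.Import):
            names.update(alias.name.split(".")[0] for alias in node.names)
        elif isinstance(node, ast.ImportFrom) and node.module and node.level == 0:
            # level == 0 skips relative imports such as "from . import x"
            names.add(node.module.split(".")[0])
    return sorted(n for n in names if importlib.util.find_spec(n) is None)

# Hypothetical LLM output with one invented dependency.
generated = """
import json
import totally_made_up_http_lib
from os import path
"""

print(missing_imports(generated))  # ['totally_made_up_http_lib']
```

It is a small gate, but it catches the invented library in milliseconds, before a human has read a single line.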

The Cost of False Positives: If you use a generic AI for security monitoring, your SOC team is going to burn out chasing ghosts. Every hour they spend investigating a "hallucinated" threat is an hour they aren't hunting real adversaries. Alert fatigue is real, and LLMs are the kings of generating noise.

The Cost of Data Leakage: Specialized AI is often local or containerized. Your Roomba doesn't send a map of your house to the public internet to train a global model. But public LLMs? They are hungry. Pasting sensitive proprietary data into a public "Guesser" is a one-way ticket to a data breach.

How to Build a Real AI Strategy (The "Sec Guy" Framework)

I am not saying LLMs are useless. Far from it. I use them every day. But I use them for what they are good at: Language.

Here is the simple rule of thumb for your IT strategy:

Use an LLM when:

You need to draft a policy document.

You need to summarize a 50-page incident report into a 3-paragraph executive brief.

You need to explain a complex technical concept to a non-technical stakeholder.

You need creative brainstorming for a phishing simulation campaign.

Use Specialized AI when:

You are making a decision that involves money or security access.

You need 100% accuracy on math or logic.

You are monitoring live systems for anomalies.

You are authenticating a user.
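If you want that rule of thumb somewhere more durable than a slide deck, it fits in a few lines. This is only a sketch of the checklist above: the `Task` fields and the `choose_tool` name are made up for the example, and your own intake process will need more nuance than four booleans.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Task:
    description: str
    affects_money_or_access: bool = False
    needs_exact_answer: bool = False
    monitors_live_systems: bool = False
    authenticates_user: bool = False

def choose_tool(task):
    """Any high-stakes flag routes the task to specialized AI; otherwise an LLM."""
    high_stakes = (
        task.affects_money_or_access
        or task.needs_exact_answer
        or task.monitors_live_systems
        or task.authenticates_user
    )
    return "specialized_ai" if high_stakes else "llm"

print(choose_tool(Task("Summarize incident report for executives")))       # llm
print(choose_tool(Task("Watch firewall logs", monitors_live_systems=True)))  # specialized_ai
```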

The Bottom Line

We are in a gold rush. And in a gold rush, the people selling the shovels make all the money. Right now, everyone is trying to sell you the "LLM Shovel" for every single hole you need to dig.

But sometimes, you don't need a shovel. Sometimes you need a scalpel. Sometimes you need a laser. Sometimes you need a bulldozer.

Don't let the hype cycle blind you to the limitations of the tech. An LLM helps you WRITE about the work. Specialized AI actually DOES the work.

As we move into this new era of SecAI+, the professionals who command the highest salaries won't be the ones who just "know AI." They will be the ones who know which AI to deploy. They will be the architects who can look at a problem and say, "That’s a job for a deterministic model, not a language model."

Be the architect. Be the engineer. And leave the storytelling to the marketing department.

Stay secure. – Sec Guy